There’s a popular caricature in the AI-for-software debate: that platforms are promising you can type one magical prompt and skip requirements discovery, architecture, testing, security, deployment, and engineering judgment.

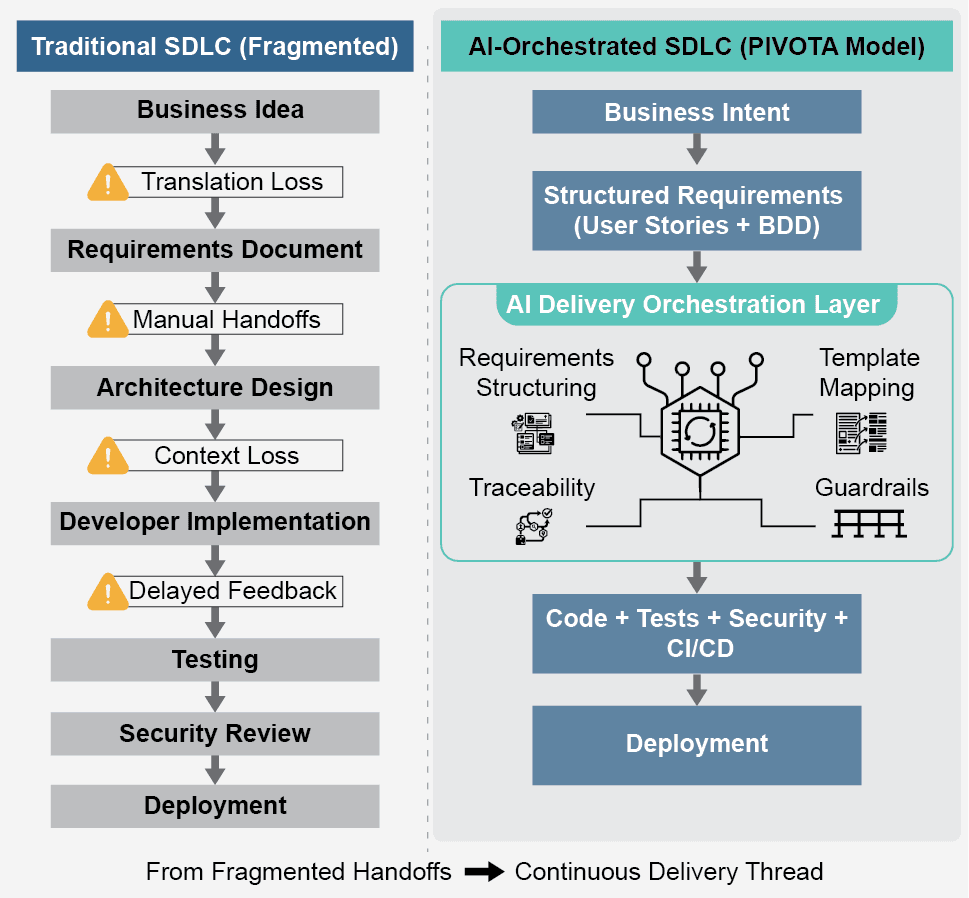

That strawman is easy to dismiss, but it’s not what platforms like PIVOTA are actually trying to do. The real goal is to reduce translation loss between business intent and technical execution by structuring requirements, orchestrating delivery across existing tools, and keeping traceability, review, and governance intact end-to-end.

1) Compression isn’t elimination

A common critique confuses lifecycle compression with lifecycle elimination. No serious enterprise platform claims requirements, design, validation, and operations simply disappear.

The claim is more practical: the handoffs between stages can be made more coherent, less lossy, and more machine-assisted. In PIVOTA’s model, plain-language ideas become structured user stories and BDD-style acceptance criteria (synced into tools like Jira or Git Boards), then move through a governed path to deployment on top of existing CI pipelines and containerized environments.
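To make "structured user stories and BDD-style acceptance criteria" concrete, here is a minimal sketch of what such a record could look like. The class names, field names, and sample story are all hypothetical illustrations, not PIVOTA's actual schema or output.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AcceptanceCriterion:
    """One BDD-style scenario in Given/When/Then form."""
    scenario: str
    given: str
    when: str
    then: str


@dataclass
class UserStory:
    """A structured story derived from a plain-language idea (hypothetical schema)."""
    story_id: str
    role: str
    goal: str
    benefit: str
    criteria: List[AcceptanceCriterion] = field(default_factory=list)

    def to_gherkin(self) -> str:
        """Render the story as Gherkin-style text for review or tracker sync."""
        lines = [
            f"Feature: {self.goal}",
            f"  As a {self.role}",
            f"  I want to {self.goal}",
            f"  So that {self.benefit}",
        ]
        for c in self.criteria:
            lines += [
                "",
                f"  Scenario: {c.scenario}",
                f"    Given {c.given}",
                f"    When {c.when}",
                f"    Then {c.then}",
            ]
        return "\n".join(lines)


# Invented sample data, for illustration only.
story = UserStory(
    story_id="STORY-1",
    role="permit applicant",
    goal="submit a permit application online",
    benefit="I do not have to visit the office in person",
    criteria=[
        AcceptanceCriterion(
            scenario="Complete application is accepted",
            given="an applicant has filled in every required field",
            when="they submit the application",
            then="the application is saved with status 'received'",
        )
    ],
)
print(story.to_gherkin())
```

The point of a structure like this is that the same record can feed a tracker ticket, a test skeleton, and a review checklist, so less is lost at each handoff.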

Bottom line: the SDLC isn’t removed; it’s orchestrated.

2) Architecture already lives in “golden paths”

Another weak spot in the debate is the hard separation between “implementation” (which AI can accelerate) and “architecture” (which AI supposedly can’t touch). In real enterprises, much of architecture is codified before any individual engineer starts typing. Platform engineering teams capture it in “golden path” assets such as:

- Skeleton source code and scaffolds

- CI/CD pipeline templates

- Infrastructure-as-code templates

- Policy guardrails

- Logging, monitoring, and observability defaults

Once architectural intent is captured in reusable patterns, applying it becomes more bounded and repeatable, which is exactly where AI systems can help teams be more consistent.

3) AI doesn’t replace architects, it operates inside guardrails

None of this means “AI becomes the architect.” Deep judgment still matters for failure domains, regulatory tradeoffs, and long-term organizational design.

But inside approved boundaries, AI can participate by doing things like:

- Generating first-pass patterns and scaffolds

- Mapping requirements to sanctioned templates

- Surfacing missing constraints and edge cases

- Carrying intent forward into code, tests, and delivery pipelines

The emerging model is not “AI as sovereign architect.” It’s AI inside a governed engineering system: specialist workflows, handoffs, guardrails, tracing, centralized policy enforcement, and reviewable outputs.
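As a rough sketch of two of the activities listed above, mapping requirements to sanctioned templates and surfacing missing constraints, consider a small catalog lookup. The catalog entries, constraint names, and the `map_requirement` helper are assumptions made for illustration; a real platform would source these from its own template registry and policy rules.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class Template:
    """A sanctioned golden-path template (hypothetical catalog entry)."""
    name: str
    workload: str                          # kind of workload the template serves
    required_constraints: FrozenSet[str]   # decisions required before scaffolding


# Hypothetical catalog maintained by a platform team.
CATALOG = [
    Template("rest-service", "api", frozenset({"data_classification", "auth_method"})),
    Template("batch-job", "batch", frozenset({"data_classification", "schedule"})),
]


def map_requirement(
    workload: str, constraints: Dict[str, str]
) -> Tuple[Optional[Template], List[str]]:
    """Return the matching template and any required constraints not yet supplied.

    When nothing in the catalog matches, return (None, []) so the caller can
    escalate to a human architect instead of improvising a design.
    """
    for template in CATALOG:
        if template.workload == workload:
            missing = sorted(template.required_constraints - set(constraints))
            return template, missing
    return None, []


template, missing = map_requirement("api", {"auth_method": "oidc"})
print(template.name, missing)  # rest-service ['data_classification']
```

The lookup never invents an architecture. It either selects an approved pattern and reports what is still undecided, or it returns nothing and leaves the decision to a person.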

4) Governance can get better, not worse

A fair worry with AI-native SDLC platforms is governance: unmanaged generation can weaken accountability.

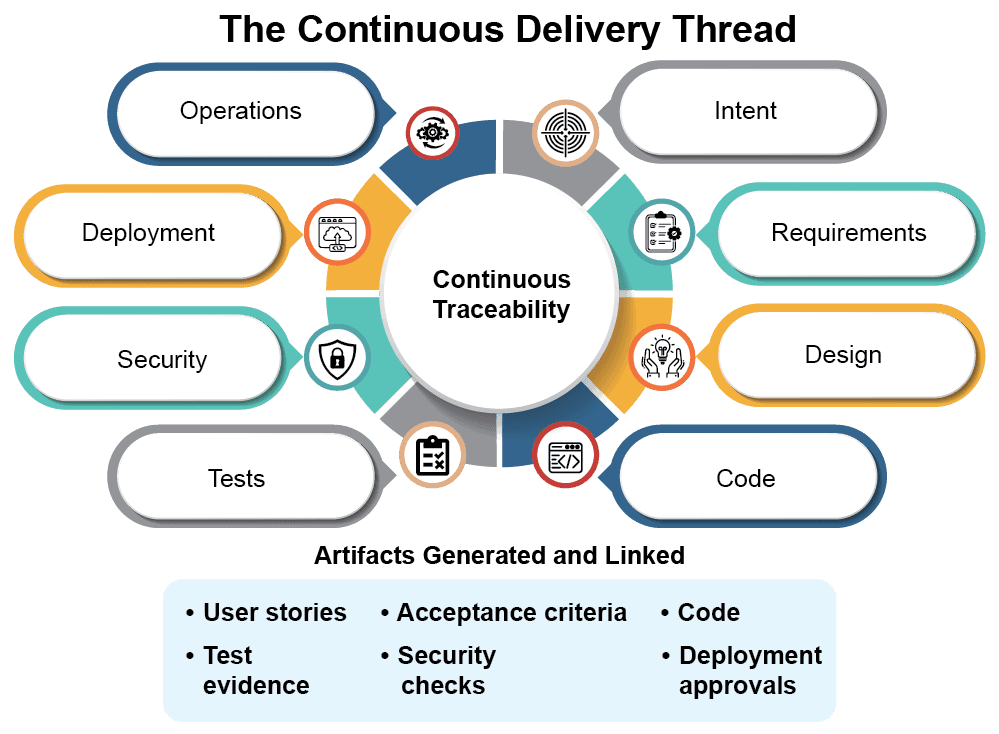

But governed orchestration can do the opposite. When requirements, acceptance criteria, generated artifacts, test evidence, approvals, and deployment checks are connected in one delivery thread, traceability improves rather than degrades.
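One way to picture a connected delivery thread is as a record that links a requirement to its downstream evidence and can report where the chain is broken. The sketch below assumes a hypothetical `DeliveryThread` record with invented identifiers; it is not drawn from any vendor's data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DeliveryThread:
    """Links one requirement to its downstream evidence (hypothetical record)."""
    requirement_id: str
    acceptance_criteria: List[str] = field(default_factory=list)
    artifacts: List[str] = field(default_factory=list)      # e.g. commit or build IDs
    test_evidence: List[str] = field(default_factory=list)  # e.g. test run IDs
    approvals: List[str] = field(default_factory=list)      # e.g. reviewer IDs

    def gaps(self) -> List[str]:
        """Name every link in the chain that has no evidence attached."""
        links = {
            "acceptance_criteria": self.acceptance_criteria,
            "artifacts": self.artifacts,
            "test_evidence": self.test_evidence,
            "approvals": self.approvals,
        }
        return [name for name, items in links.items() if not items]


def release_ready(threads: List[DeliveryThread]) -> bool:
    """A release passes only if no thread has a missing link."""
    return all(not t.gaps() for t in threads)


# Invented sample data: everything is present except a human approval.
thread = DeliveryThread(
    requirement_id="REQ-7",
    acceptance_criteria=["AC-1"],
    artifacts=["commit-abc123"],
    test_evidence=["run-42"],
)
print(thread.gaps())            # ['approvals']
print(release_ready([thread]))  # False
```

An auditor can ask the same question of every requirement and get a concrete answer, which is the sense in which traceability can improve rather than degrade.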

PIVOTA positions itself around continuous traceability, governance, and compliance built into each step, and it targets regulated, multi-team environments. For example, in a permit-processing platform effort, the workflow converted inputs into structured stories, tests, and application code while maintaining traceability and human review throughout.

That’s not proof every vendor has solved governance, but it does push back on the idea that these platforms “just generate software” and leave nothing behind for audit.

5) The real critique: show the evidence

Where skeptics have a legitimate point is evidence. Many platforms have stronger narratives than public proof.

PIVOTA claims faster delivery, fewer defects, instant traceability, and throughput gains. It’s reasonable to ask for clearer methodology, broader deployment evidence, and independent validation.

That’s a different argument from pretending the category’s promise is “one prompt and software engineering disappears.”

Conclusion: From prompts to governed delivery

The shift underway isn’t about replacing engineers with AI prompts. It’s a move from disconnected, manual, and retrospective processes to a connected delivery thread in which intent, structure, generation, validation, and governance converge earlier. The result is not just efficiency but closer alignment between business goals and technical execution, with clearer accountability at each stage.

The crucial question isn’t whether AI platforms will render architects irrelevant. It’s whether these tools can make engineering decisions more transparent, repeatable, and auditable while keeping humans in charge of the most critical and complex tradeoffs. That shift doesn’t diminish expertise; it amplifies it by building accountability into each decision point.

If you’re assessing AI-native delivery tools, don’t just ask whether they automate tasks. Ask where your software development lifecycle already has standardized practices and how better traceability could change your outcomes. Organizations that put that traceability to use will be better placed to innovate, meet regulatory demands, and build trust in their products.